As transactional systems scale, databases quietly accumulate years of historical records—most of which are rarely queried. Yet, this “cold data” continues to consume expensive SSD storage, inflate indexes, and slow down critical operations.

In this post, we’ll walk through how we reduced our MySQL storage by 80%, improved query performance, and built a low-cost analytics layer using S3 + Athena—without losing SQL query capabilities.

We had a very similar problem recently. One of our database tables was growing rapidly in size, with almost 500,000 rows being added daily. To make matters worse, it had data since 2018. We were doing only a few aggregation queries daily on this data. We realized we were basically running a data warehouse workload on a transactional database.

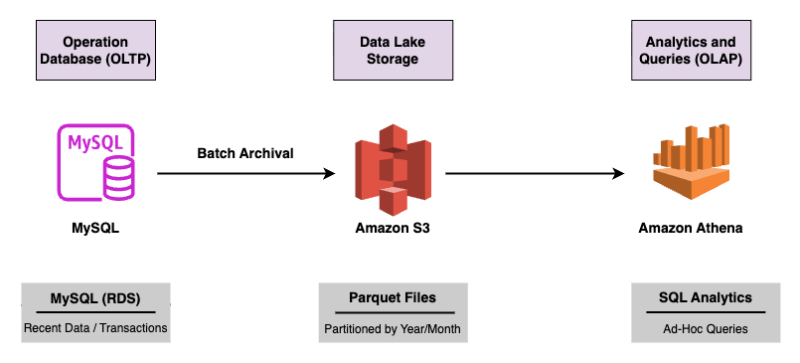

The core idea is simple: move cold data to inexpensive object storage and query it only when needed. We looked at different solutions: S3+Athena vs ClickHouse vs Redshift Serverless. ClickHouse required managing servers and had non-trivial operational overhead. Redshift Serverless was costlier for our infrequent query pattern. For our use case, S3+Athena seemed to be the best fit because:

Amazon Simple Storage Service (Amazon S3) is an object storage service from AWS. Amazon Athena is a serverless, interactive query service that makes it easy to analyze data directly in Amazon S3 using standard SQL. It requires no infrastructure setup, scales automatically, and charges only for the data scanned per query. It supports formats like CSV, JSON, ORC, Avro, and Parquet.

We chose Parquet as our storage over JSON or CSV. Apache Parquet is an open-source, column-oriented binary file format designed for efficient data storage and high-performance analytics. By storing data by column rather than row, Parquet enables significant compression, reduced I/O, and fast data retrieval. We chose the built-in snappy compression because it’s optimized for a balance of compression ratio and CPU performance, allowing Athena to decompress data rapidly during a scan. Parquet supports predicate pushdown, allowing query engines like Athena to skip irrelevant data files or row groups, resulting in lower scan costs. It uses metadata—min/max statistics—within Parquet files to read only relevant data, significantly reducing I/O operations, network transfer, and memory usage. We decided to partition the data on year and month because that led to ideal parquet file sizes as well as query optimization for our use case.

We have partitioned the s3 data by year and month.

s3://bucket/

year=2024/

month=11/

data_*.parquetThis helps us in partition pruning, reducing Athena scan cost, and keeping parquet size manageable.

Athena expects partitioned data to follow a Hive-style layout (e.g., year=2026/month=2/). Without this, partition discovery (via MSCK REPAIR TABLE) will not work. Small parquet files hurt Athena’s performance, so we use a “Compaction” step to merge these into ~256MB files once a month to keep queries lightning fast and cheap. Athena performs best in this file size range due to reduced file open overhead and efficient parallelism.

Our principle for the migration was very clear from the beginning - never delete from RDS until this new workflow is fully validated. We planned to run the new workflow in parallel for a few days, and validate the data before deleting it from RDS. We validated by comparing:

- Row counts between RDS and Athena

- Results of our daily aggregation query on both

- Spot-checking sampled records across both systems for data consistency

- Comparing checksums/hashes for subsets of data

We decided to migrate in two phases:

Initial Bulk Load - Since we did not have to perform any transformations on the data and simply wanted to export the data from a MySQL table to Parquet, we decided to write a simple Python script to partition the data month-wise and upload parquet files for each month in S3.

The Daily Delta - Once the historical data was exported, we modified the above script to upload daily archival data to S3.

Since the dataset was large, we fetched data in batches from MySQL and streamed it directly to S3. Find a sample code below:

with db_helpers.read_cursor() as cursor:

cursor.execute(

f"select * from xyz where date < date(date_add(now(), INTERVAL -30 day))"

)

fs = pa.fs.S3FileSystem(

region=aws_region,

access_key=aws_s3_access_key,

secret_key=aws_s3_secret_access_key,

)

writer = None

while True:

rows = cursor.fetchmany(BATCH_SIZE)

if not rows:

break

# convert batch to arrow table

cols = list(zip(*rows))

arrays = [pa.array(col, type=field.type) for col, field in zip(columns, schema)]

table = pa.Table.from_arrays(arrays, schema=SCHEMA)

if writer is None:

path = (

f"bucket/"

f"year={archive_date.year}/"

f"month={archive_date.month}/"

f"data_{int(archive_date.timestamp())}.parquet"

)

# Initialize Parquet writer (once per file)

writer = pq.ParquetWriter(

path,

SCHEMA,

filesystem=fs,

compression="snappy",

)

writer.write_table(table)

# Close writer after all batches

if writer:

writer.close()We need to first create the table in Athena so that it can map the data in parquet file

CREATE EXTERNAL TABLE xyz (

xyz string,

…

)

PARTITIONED BY (

year int,

month int

)

STORED AS PARQUET

LOCATION 's3://bucket/'Note that Athena does not automatically discover new partitions in S3, and we may have to add the partitions manually.

For historical data load we can do:

MSCK REPAIR TABLE xyz;MSCK REPAIR TABLE scans the entire S3 prefix every time. For tables with years of partitions this can take several minutes. So, for incremental partitions, we can just add the specific partition:

ALTER TABLE xyz

ADD IF NOT EXISTS PARTITION (year={archive_date.year}, month={archive_date.month})

LOCATION 's3://bucket/year={archive_date.year}/month={archive_date.month}'Once we have the Athena Table ready, we can simply query the Athena table using SQL

SELECT count(*)

FROM xyz

WHERE year = 2024

AND month = 11;We observed ~5x compression using Parquet with Snappy. Combined with partition pruning, Athena queries scanned significantly less data—reducing both latency and cost.

| Metric | Before (RDS) | After (S3 + Athena) |

|---|---|---|

| Storage Size | 500 GB | 100 GB (Compressed) |

| Storage Cost | ~$57.50 / month | ~$2.3 / month |

| Query Speed | Minutes (Index bloat) | Seconds (Columnar scan) |

| Maintenance | High (Indexing, backups, replication overhead) | Zero (Serverless) |

By separating OLTP and OLAP workloads, we turned our MySQL database back into what it should be—a fast transactional system—while unlocking scalable, low-cost analytics on S3.

This pattern is simple, cost-effective, and highly scalable—but like all systems, it works best when applied to the right kind of data: append-heavy, rarely updated, and analytically queried.

Want to read more about how we think and build at Ipsator?